The Living Product

A thesis on the future of software

The plastic plant

For most of software history, we have been building plastic plants.

Some of them are impressive. Some are beautiful. Some make a lot of money. Some genuinely help people. But they are still plastic plants. They do not grow. They do not heal. They do not reorganize themselves in response to the world.

Every branch was placed there by a human. Every new leaf was debated, specified, designed, and attached manually. When the world changes — when a policy shifts, when users develop new habits, when a competitor moves — the product does nothing. It does not participate in its own development. It waits.

AI has mostly been used to accelerate this paradigm. Cursor, Claude Code, copilots, agentic dev workflows, dark factories — these are real shifts. They matter. They are changing the economics and speed of building software right now. But the underlying model is unchanged: humans determine what should exist. AI helps produce it. The software remains static once shipped.

AI writes the code faster. AI drafts the ticket faster. AI produces mocks faster. AI helps debug faster. But the product itself is still an object humans construct and maintain from the outside. It still does not sense its environment, generate new structure, test adaptations, prune weak growth, or strengthen itself over time.

We keep asking how AI can help us build software faster.

A bigger question is coming: what if software no longer needed to be built at all?

Software is becoming something new

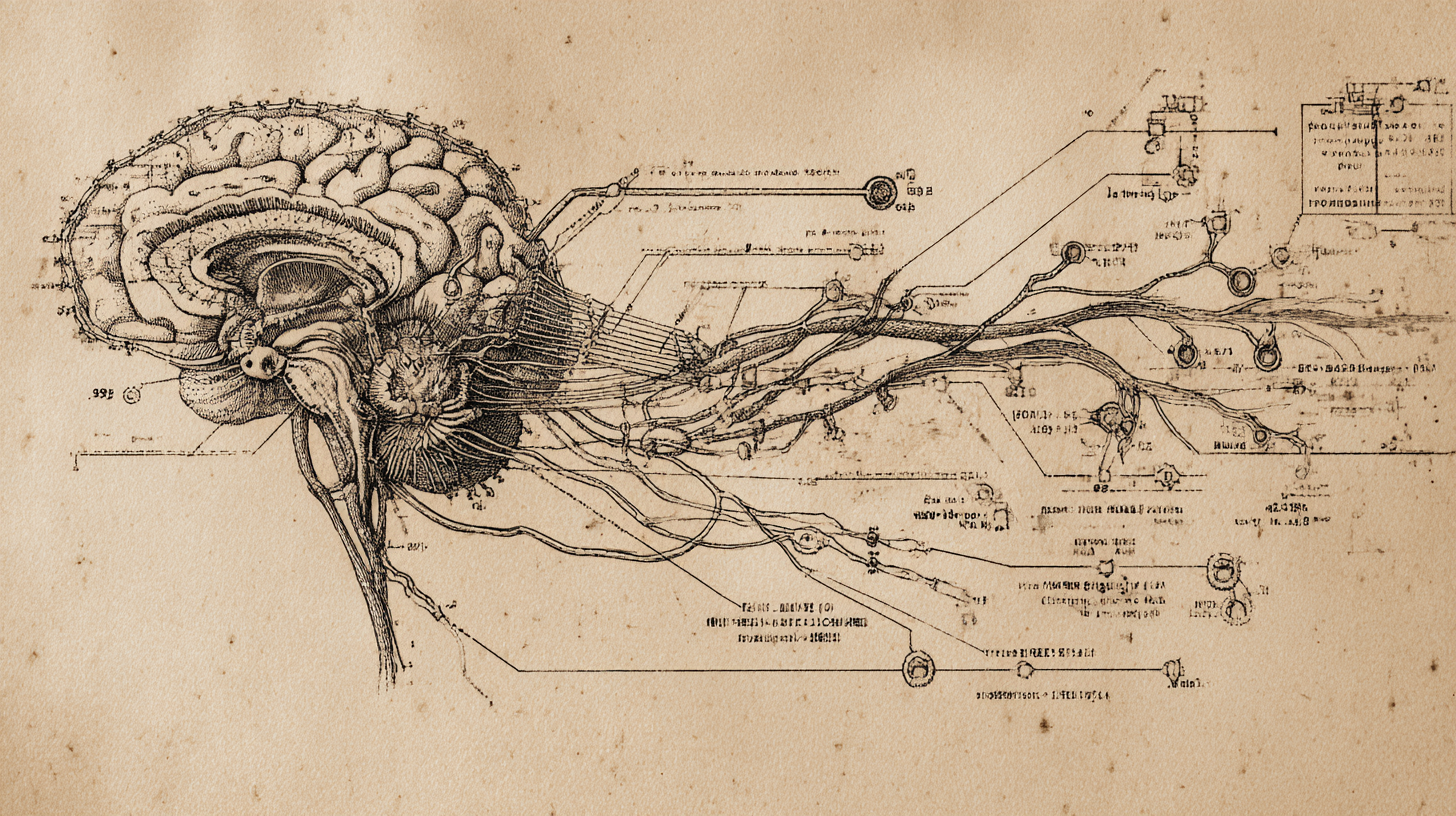

The future of software is not AI making the old model faster. The future is software that behaves less like an inert tool and more like an organism — sensing, adapting, healing, growing, and improving over time.

The job for humans shifts from specifying features to specifying the conditions under which features can emerge on their own.

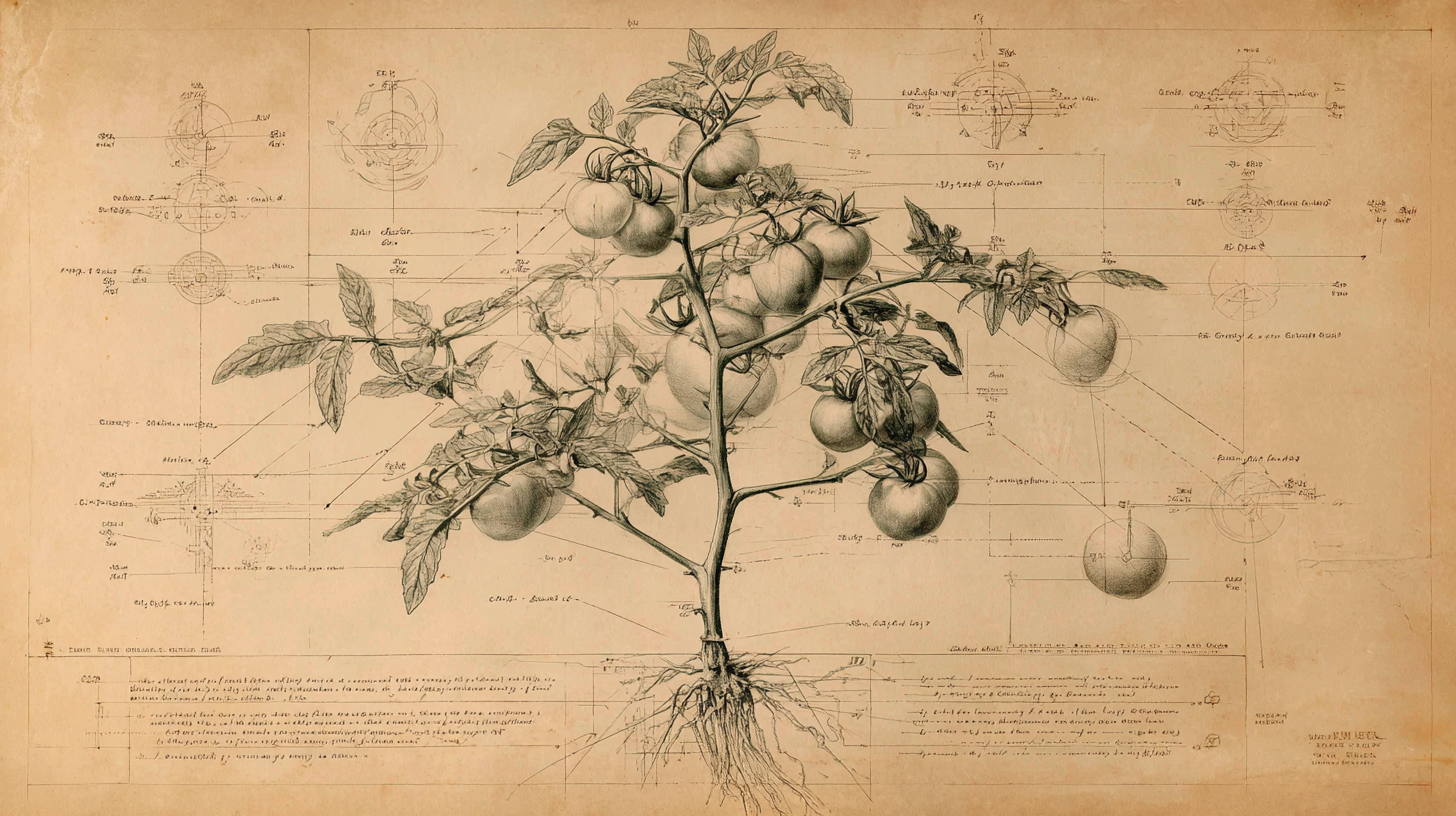

Think about a tomato plant. The seed does not contain a script for every future branch, leaf, or piece of fruit. It contains encoded logic for how the organism responds to conditions — light, water, nutrients, crowding, stress, support. Its actual form emerges through interaction with its environment.

That is the shift. We are moving from building software to cultivating it.

DNA, Signals, Purpose

If the living product is the thesis, this is the working model.

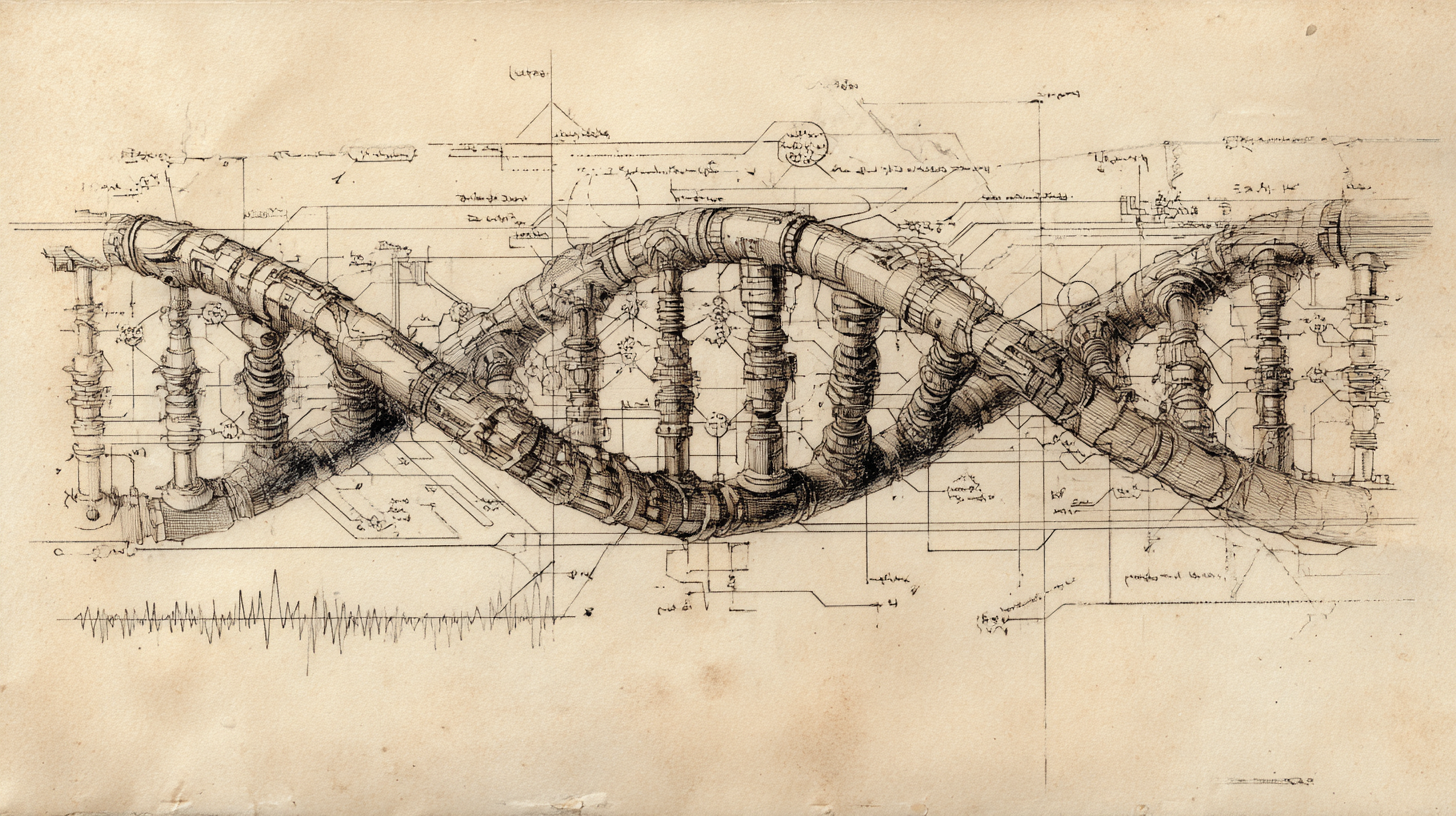

In the old model, the codebase mostly describes current functionality. It is a record of what exists.

In the living-product model, the codebase becomes something more like DNA. It encodes the rules that govern growth. The DNA defines:

- What signals the system pays attention to and what it ignores

- What counts as a problem worth solving

- What kinds of changes it's allowed to make on its own

- How it decides between competing options

- What triggers growth and what triggers pruning

The codebase stops being a record of what the product is. It becomes a set of instructions for what the product is allowed to become.
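One way to make the DNA idea concrete is to write the rules down as data the system can consult. The sketch below is only an illustration: `GrowthRules`, its field names, and every threshold are hypothetical, chosen to mirror the five bullets above rather than taken from any real system.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GrowthRules:
    """Hypothetical 'DNA' for one product area. All names and numbers are assumptions."""
    # Signals the system pays attention to; everything else is ignored.
    watched_signals: frozenset = frozenset({"support_ticket", "drop_off", "lost_deal"})
    # A theme counts as a problem worth solving once it recurs this often.
    min_reports_for_problem: int = 5
    # Kinds of change the system may make without human sign-off.
    autonomous_changes: frozenset = frozenset({"copy_edit", "default_value"})
    # How strongly added complexity counts against a competing option.
    complexity_penalty: float = 1.0
    # Usage share below which a feature triggers pruning.
    prune_below_usage: float = 0.02


def is_problem(rules, signal_kind, report_count):
    """A watched signal that recurs often enough is a problem worth solving."""
    return (signal_kind in rules.watched_signals
            and report_count >= rules.min_reports_for_problem)


def may_change_alone(rules, change_kind):
    """True only for change kinds the rules explicitly allow."""
    return change_kind in rules.autonomous_changes


def choose(rules, options):
    """Pick the option with the best benefit after penalizing complexity.

    Each option is a (name, expected_benefit, complexity_cost) tuple.
    """
    best = max(options, key=lambda o: o[1] - rules.complexity_penalty * o[2])
    return best[0]


def should_prune(rules, usage_share):
    """Features used by too small a share of users become pruning candidates."""
    return usage_share < rules.prune_below_usage
```

The rules hold no features. They only constrain what may grow, which is why changing a threshold here changes the product's future rather than its present.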

A tomato plant doesn't grow from a plan. It grows from what it can sense — light, water, temperature, the presence of other plants competing for the same resources. Its environment shapes its form.

A living product works the same way. It grows in response to what it can perceive:

- How users actually behave inside the product, not just what they say they want

- What support teams keep hearing over and over

- What sales is losing deals over

- What competitors just shipped or abandoned

- What changed in regulation, policy, or public expectation

- What the business can actually afford to prioritize right now

The hard part is not collecting more data. The hard part is deciding what counts as signal, what counts as noise, and which parts of reality the system should be taught to pay attention to.

Every team will have access to the same models. The advantage belongs to whoever teaches theirs to see reality more clearly — what to pay attention to, what to ignore, and how to read multiple signals at once. That judgment is harder to build than the software itself, and it's worth more.

Purpose is the most important concept in the framework.

A tomato plant does not need a human being to define its purpose. The purpose is inherited. Through evolution, living organisms are shaped by a hard-coded drive toward survival and reproduction. They do not choose their objective. It is built in.

Software has no such inheritance.

A living product has no built-in understanding of what it should be trying to produce, what kind of growth is healthy, or what outcomes are worth pursuing. Humans have to define that.

That is the purpose. Not the vague mission statement. Not the polished values page. The actual operational definition of what this system is being directed toward.

What outcomes matter? What tradeoffs are acceptable? What does healthy growth look like? What kind of fruit do we want this organism to produce? What are we unwilling to produce, even if the metrics look good in the short term?

In the old model, product leaders spent most of their energy defining features. In the new model, the deeper responsibility is defining purpose. That is where values enter the system.

How a living product works

A living product works as a loop.

Sense

The product pays attention to what is happening around it — not just analytics inside the app, but the real signals outside it too. How users behave. Where they get stuck. What support keeps hearing. What sales keeps hearing. What competitors are releasing. What changed in the market, in policy, or in public opinion.

Interpret

This is the hard part.

Most of what a product sees is messy, incomplete, or misleading. People ask for things that will not actually solve their problem. Teams overreact to loud feedback. Metrics move for reasons that have nothing to do with the product.

So the system has to do more than collect information. It has to figure out what is actually happening, what matters, and what is worth acting on. That means separating signal from noise. It means noticing patterns across different sources. It means recognizing that one support ticket is not important, but the same friction showing up in support, user behavior, and sales conversations probably is.
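The cross-source idea can be sketched in a few lines. This is a minimal illustration, not a full interpretation layer: it assumes each observation has already been tagged with a theme (the genuinely hard step, which is not shown), and the function name, theme labels, and `min_sources` threshold are all hypothetical.

```python
from collections import defaultdict


def corroborated_themes(observations, min_sources=2):
    """Return themes reported by at least `min_sources` distinct sources.

    `observations` is an iterable of (source, theme) pairs. Repeats from the
    same source do not add weight; only independent sources count.
    """
    sources_by_theme = defaultdict(set)
    for source, theme in observations:
        sources_by_theme[theme].add(source)
    return sorted(
        theme for theme, sources in sources_by_theme.items()
        if len(sources) >= min_sources
    )


observations = [
    ("support", "confusing_export"),
    ("support", "dark_mode_request"),
    ("behavior", "confusing_export"),
    ("sales", "confusing_export"),
    ("support", "dark_mode_request"),  # loud, but still a single source
]
print(corroborated_themes(observations, min_sources=3))
# prints ['confusing_export']
```

Counting distinct sources rather than raw mentions is the design choice that matters here: it keeps one loud channel from outvoting quiet agreement across several.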

Grow

Once the system has a clearer view of the opportunity, it can start proposing responses.

Sometimes that might mean a new feature. Sometimes it might mean a simpler flow, a clearer explanation, a different sequence, or a better default. The point is not just to produce more stuff. The point is to find the change that best serves the purpose.

A living product should be able to explore different ways to respond, test them, and move forward with the ones that actually work.

Learn

Then it has to learn from what happened.

Did it notice the right thing? Did the change help in the way we expected? Did it improve the actual purpose of the product, or just make one number go up for a week? Did it fix one problem while creating a new one somewhere else?

Each cycle should make the next one smarter.

Heal & Prune

Living things do not just grow. Healthy ones also repair damage and cut away what no longer serves them.

A living product should catch problems early. Broken flows. Regressions. Slow performance. Abuse. Features that add complexity without adding value. It should repair what it can and remove what no longer deserves to stay.

That matters because bad software usually does not fail all at once. It gets heavier, noisier, and harder to improve. It slowly fills with things nobody would build again if they were starting fresh.

As in a living creature, these stages loop constantly: no kickoff, no end, no backlog, no one deciding it is time to start. The environment changes, and the organism responds.

At Willow, we are already seeing spec-to-feature development happen with AI. We are not at a true dark factory, but the amount of human effort required to move from idea to shipped work is dropping fast. Our figurative light bill is getting pretty low.

What is even more interesting is that our Chief Academic Officer has built the groundwork for something similar in curriculum design. The curriculum is structured in a way that makes it much easier to generate, adapt, and improve lessons with AI without starting from scratch each time.

That does not mean the system is running itself. It means the conditions are starting to exist for software and curriculum to become much more adaptive than the old model allowed.

The human job gets bigger, not smaller

This is where a lot of the AI conversation still gets the future wrong.

In a living-product world, humans do not disappear. But they are no longer doing the same job they did in the old model.

- They are not specifying features. They are defining the rules of growth.

- They are not reading dashboards. They are deciding what signals the system should trust.

- They are not prioritizing tickets. They are defining purpose.

- They are not building roadmaps. They are intervening when the organism produces something locally effective but strategically or ethically wrong.

- They are not in the weeds of their product. They are making the bold bets that the data cannot support yet but that judgment and intuition say matter.

Part geneticist. Part gardener. Part founder. Part moral authority. Part parent.

No single title fully captures that. Which is exactly what you would expect if the responsibility itself is new.

And that matters because the organism will be very good at optimization. It will not be very good at meaning, and it will have no innate sense of responsibility for using its power well. It will not naturally orient toward what is best for humanity. It will not instinctively protect against harm, preserve dignity, or recognize when growth has become poisonous. It will optimize toward the purpose we define.

That is why the human role becomes more important, not less.

Optimization is not vision. It does not tell you what future is worth creating, what tradeoffs are acceptable, or what kinds of growth should be rejected even if they perform well. That remains human work.

First steps

This does not begin with fully autonomous software.

It begins with a series of concrete steps that move your product from static to adaptive.

Pull your signals into one place

Aggregate your logs, product analytics, support tickets, sales notes, and user behavior so they can be read together instead of sitting in separate tools.
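In practice, "one place" mostly means one shape. The sketch below assumes two hypothetical input formats (a support ticket with `created` and `subject` keys, a sales note with `date` and `summary` keys) and normalizes both into a single `Signal` record so they can be read on one timeline. Real tools will have different fields; the adapters are where that difference gets absorbed.

```python
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class Signal:
    """One normalized observation, whatever tool it came from (hypothetical shape)."""
    source: str
    occurred_at: datetime
    text: str


def from_support(ticket):
    """Adapter for an assumed ticket format: {'created': ISO string, 'subject': str}."""
    return Signal("support", datetime.fromisoformat(ticket["created"]), ticket["subject"])


def from_sales(note):
    """Adapter for an assumed sales-note format: {'date': ISO string, 'summary': str}."""
    return Signal("sales", datetime.fromisoformat(note["date"]), note["summary"])


def merge_timeline(*streams):
    """Flatten any number of Signal streams into one list, oldest first."""
    return sorted(
        (signal for stream in streams for signal in stream),
        key=lambda signal: signal.occurred_at,
    )


tickets = [{"created": "2024-03-02T09:00:00", "subject": "Export fails on large files"}]
notes = [{"date": "2024-03-01T15:30:00", "summary": "Lost deal: export too limited"}]

timeline = merge_timeline(map(from_support, tickets), map(from_sales, notes))
for signal in timeline:
    print(signal.occurred_at.date(), signal.source, signal.text)
```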

Start listening outside the product

Create systems that pull in the outside reality shaping your users: relevant news, policy changes, public opinion, competitor releases, and other shifts in your domain.

Ask for more direct feedback

Roll out short, targeted feedback requests inside the product. Not giant surveys. Small questions at the right moments.

Build tools that look for needs, not just requests

Take all of those inputs and create systems that look for recurring problems, unmet needs, and emerging opportunities. The goal is not to collect more noise. It is to surface what actually matters.

Create realistic simulated users

Build detailed versions of your ideal customer profiles with believable goals, preferences, frustrations, and behaviors. Use them to pressure-test flows, react to new ideas, and expose obvious weaknesses before you ship.

Start with narrow loops

Do not try to make the whole product adaptive at once. Pick one area. One workflow. One kind of user problem. Build the loop there first.

Get serious about pruning

Most teams are obsessed with shipping and weak at removal. Start building the habit of cutting features, steps, and complexity that no longer earn their keep.
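A pruning habit can start as a simple recurring report. The sketch below is one possible starting point, not a recommended policy: the feature records, the `usage_share` and `age_days` fields, and both thresholds are assumptions, and the output is a list for humans to review rather than an automatic removal.

```python
def pruning_candidates(features, min_usage_share=0.02, min_age_days=90):
    """Return names of features that are old enough to judge and barely used.

    Each feature is a dict with 'name', 'usage_share' (fraction of active
    users who touch it), and 'age_days'. New features are skipped so they
    get time to find their audience. Least-used candidates come first.
    """
    candidates = [
        feature for feature in features
        if feature["age_days"] >= min_age_days
        and feature["usage_share"] < min_usage_share
    ]
    candidates.sort(key=lambda feature: feature["usage_share"])
    return [feature["name"] for feature in candidates]


features = [
    {"name": "legacy_import", "usage_share": 0.004, "age_days": 400},
    {"name": "new_onboarding", "usage_share": 0.010, "age_days": 14},
    {"name": "search", "usage_share": 0.610, "age_days": 900},
    {"name": "pdf_themes", "usage_share": 0.015, "age_days": 200},
]
print(pruning_candidates(features))
# prints ['legacy_import', 'pdf_themes']
```

Low usage alone is a weak signal. A rarely used feature may still be the reason a key customer stays, so a list like this is an input to judgment, not a substitute for it.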

Define purpose more precisely

If your team cannot clearly say what the product should optimize for, the rest of this will go sideways. Better signals and better generation only matter if they are pointed at the right thing.

These are real, bite-sized steps. None require magic. But taken together, they start changing the nature of the product.

Open questions

The opportunity is real. So are the hard questions.

How do we know which signals to trust?

Most products already have too much information. The challenge is not access. It is judgment.

How good can simulated users actually get?

At what point are they helpful, and at what point do they start giving us false confidence?

How do we prevent optimization toward the wrong purpose?

If the system gets very good at improving the wrong thing, it can do real damage.

What should remain permanently human?

Maybe purpose definition. Maybe final approval in certain domains. Maybe more than we think.

How much autonomy should a product earn before we trust it more?

This is probably not binary. But most teams do not yet have a good model for where the boundaries should sit.

How do we measure health, not just movement?

A product can become more active, more complex, and more responsive without becoming better.

How do we keep adaptive systems from bloating over time?

Growth without pruning does not create something living and healthy. It creates something heavy and unstable.

These are not reasons to dismiss the idea.

They are the questions serious builders should already be grappling with.

For most of software history, we treated products like objects: assembled, shipped, maintained.

That era is ending.

The next generation of software will not be built. It will be grown. It will sense its environment. It will adapt. It will heal. It will prune its own excess.

And once software can grow, the central question changes.

It is no longer: What should we build next?

It becomes: What are we cultivating — and what kind of fruit do we want it to bear?

That question is not a technical one. It is a moral one. The leaders who matter most will be the ones who can design the DNA, shape the environment, define the purpose — and take responsibility for what grows.